Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Agenta centralizes prompt management and evaluation for seamless collaboration in building reliable AI applications.

Last updated: March 1, 2026

OpenMark AI empowers you to benchmark 100+ LLMs on your specific tasks to find the best fit for quality, speed, and cost.

Last updated: March 26, 2026

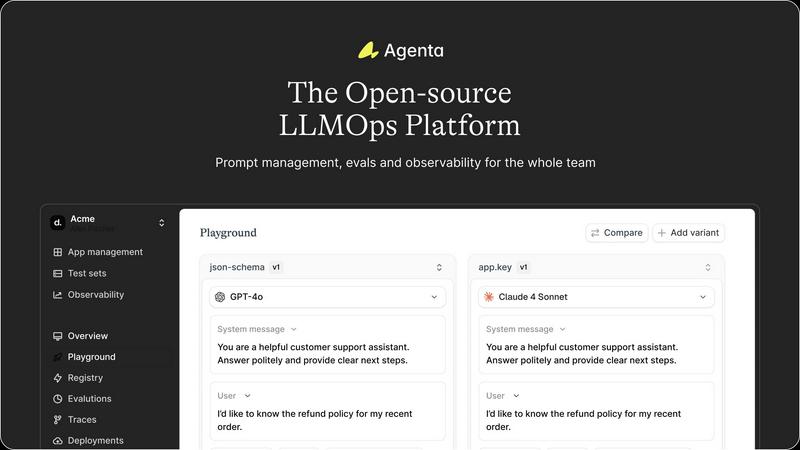


Feature Comparison

Agenta

Centralized Workflow Management

Agenta keeps all prompts, evaluations, and traces in one platform, giving the team a single source of truth. This removes the confusion of scattered workflows and makes collaboration and communication among team members smoother.

Unified Playground

In Agenta's unified playground, teams can compare prompts and models side by side to evaluate changes more thoroughly. The playground keeps a complete version history, so teams can track modifications and see how their prompts evolved over time.

Automated Evaluation

Agenta's automated evaluation replaces guesswork with data. Teams can systematically run experiments, track results, and validate every change, so decisions during development rest on evidence.
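To make "validate every change" concrete, here is a minimal sketch of an evaluation loop that scores two prompt variants against the same test set. It does not use Agenta's SDK; `exact_match`, `evaluate`, `variant_v1`, `variant_v2`, and the test cases are hypothetical stand-ins, and the variant functions replace real LLM calls with simple keyword rules so the example runs offline.

```python
def exact_match(expected: str, actual: str) -> bool:
    """Hypothetical evaluator: case-insensitive exact match."""
    return expected.strip().lower() == actual.strip().lower()


def evaluate(variant, test_cases, evaluator=exact_match) -> float:
    """Return the fraction of test cases the variant answers correctly."""
    if not test_cases:
        raise ValueError("test_cases must not be empty")
    passed = sum(
        evaluator(case["expected"], variant(case["input"]))
        for case in test_cases
    )
    return passed / len(test_cases)


# Stand-ins for two prompt variants. A real variant would call an LLM.
def variant_v1(text: str) -> str:
    return "positive" if "good" in text else "negative"


def variant_v2(text: str) -> str:
    lowered = text.lower()
    return "positive" if ("good" in lowered or "great" in lowered) else "negative"


# Illustrative test set; not real evaluation data.
test_cases = [
    {"input": "This is good", "expected": "positive"},
    {"input": "Great value", "expected": "positive"},
    {"input": "Broke after a day", "expected": "negative"},
    {"input": "Really GOOD support", "expected": "positive"},
]

baseline = evaluate(variant_v1, test_cases)
candidate = evaluate(variant_v2, test_cases)
print(f"baseline={baseline:.2f} candidate={candidate:.2f}")

# Accept the change only if it does not score worse than the baseline.
accept_change = candidate >= baseline
```

Running both variants on an identical test set is what makes the comparison fair: any difference in score comes from the change itself rather than from different inputs.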

Enhanced Observability

Agenta provides robust observability tools that allow teams to trace every request and pinpoint exact failure points. This feature includes the ability to annotate traces for team collaboration and gather user feedback, ultimately leading to more informed debugging and improved system performance.

OpenMark AI

Real-Time Benchmarking

OpenMark AI offers real-time benchmarking capabilities, allowing users to run their tests immediately against a wide range of models. This feature eliminates the need for time-consuming setup processes, enabling quick comparisons of different AI models based on actual performance on specified tasks.

Comprehensive Metric Analysis

The platform reports key performance metrics for each model: cost per request, latency, scored quality, and output consistency. Users can compare models visually and base decisions on measured results rather than surface-level impressions.

Hosted Solution with No API Keys

OpenMark AI does not require users to manage separate API keys for each model. The application handles all API calls itself, so developers and product teams can focus on benchmarking rather than setup.

Support for Diverse AI Models

OpenMark AI supports a large catalog of models from various providers, including OpenAI, Anthropic, and Google. This extensive support allows users to explore and compare a multitude of options tailored to their specific tasks, ensuring they find the best fit for their requirements without being limited to a single provider.

Use Cases

Agenta

Collaborative LLM Development

Agenta is ideal for AI teams looking to streamline their collaborative efforts in developing LLM applications. By providing a centralized platform, team members can work together more effectively, reducing silos and enhancing productivity.

Structured Experimentation

Teams can use Agenta to conduct structured experiments with their prompts. The platform's automated evaluation tools allow for quick iterations and data-driven decisions, making it easier to refine and enhance LLM applications continuously.

Efficient Debugging

When errors occur, Agenta's observability features enable teams to debug efficiently. By tracing requests and pinpointing failure points, teams can address issues rapidly without resorting to guesswork, thus improving overall application reliability.

Domain Expert Involvement

Agenta empowers domain experts to engage directly in the LLM development process. With a user-friendly interface that allows for safe editing and experimentation with prompts, experts can contribute valuable insights without needing extensive coding knowledge.

OpenMark AI

Model Selection for AI Features

Development teams can leverage OpenMark AI to compare different AI models side-by-side when selecting which model to integrate into their applications. This ensures that they choose the most effective model for their specific use case, enhancing the overall quality of their AI features.

Cost Efficiency Analysis

Businesses can utilize OpenMark AI to analyze the cost efficiency of various LLMs. By comparing the quality of outputs relative to the costs incurred per request, teams can make budget-conscious decisions while maintaining high standards in their AI implementations.
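One way to read "quality relative to cost" is as quality points per dollar, subject to a minimum quality bar. The sketch below illustrates that calculation only; it is not OpenMark AI's scoring method, and `ModelResult`, `quality_per_dollar`, `rank_by_cost_efficiency`, the 0-1 quality scale, and all numbers are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    """One row of hypothetical benchmark output for a single model."""
    name: str
    quality: float           # scored quality on a 0-1 scale (assumed)
    cost_per_request: float  # US dollars per request (assumed)


def quality_per_dollar(result: ModelResult) -> float:
    """Return quality points obtained per dollar spent on one request."""
    if result.cost_per_request <= 0:
        raise ValueError("cost_per_request must be positive")
    return result.quality / result.cost_per_request


def rank_by_cost_efficiency(results, min_quality=0.0):
    """Drop models below the quality floor, then sort best value first."""
    eligible = [r for r in results if r.quality >= min_quality]
    return sorted(eligible, key=quality_per_dollar, reverse=True)


# Illustrative numbers only; not real benchmark data.
results = [
    ModelResult("model-a", quality=0.92, cost_per_request=0.0040),
    ModelResult("model-b", quality=0.88, cost_per_request=0.0010),
    ModelResult("model-c", quality=0.61, cost_per_request=0.0002),
]

ranked = rank_by_cost_efficiency(results, min_quality=0.80)
for r in ranked:
    print(f"{r.name}: {quality_per_dollar(r):.0f} quality points per dollar")
```

The quality floor matters: without it, the cheapest model would rank first on the ratio alone even though its output quality may be too low to ship.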

Consistency Testing

OpenMark AI is ideal for teams needing to ensure output consistency across multiple runs. By benchmarking the same task repeatedly, users can identify models that deliver stable and reliable performance, thus reducing the risk of variability in AI outputs.
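Output consistency across repeated runs can be summarized with a mean and a spread per model. The following sketch assumes each run already has a numeric quality score; `consistency_report` and the scores are hypothetical, and the choice of population standard deviation is an assumption rather than a description of how OpenMark AI measures stability.

```python
from statistics import mean, pstdev


def consistency_report(scores_by_model):
    """Summarize repeated-run scores as mean, spread, and range per model."""
    report = {}
    for model, scores in scores_by_model.items():
        if len(scores) < 2:
            raise ValueError(f"{model}: need at least two runs")
        report[model] = {
            "mean": mean(scores),
            "stdev": pstdev(scores),  # population standard deviation
            "range": max(scores) - min(scores),
        }
    return report


# Illustrative scores from five runs of the same task; not real data.
runs = {
    "model-a": [0.90, 0.91, 0.89, 0.90, 0.90],
    "model-b": [0.95, 0.70, 0.99, 0.80, 0.96],
}

report = consistency_report(runs)
most_stable = min(report, key=lambda m: report[m]["stdev"])

for model, stats in report.items():
    print(f"{model}: mean={stats['mean']:.3f} stdev={stats['stdev']:.3f}")
print(f"most stable: {most_stable}")
```

In this made-up data, model-b reaches the highest single score but varies widely between runs, which is the kind of pattern a single output would hide.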

Pre-Deployment Model Validation

Before launching AI-driven features, teams can use OpenMark AI to validate their chosen models against real-world tasks. This pre-deployment testing helps mitigate risks associated with poor model performance, ensuring that only the most capable models are put into production.

Overview

About Agenta

Agenta is an open-source LLMOps platform for AI teams that build and deploy large language model (LLM) applications. LLM behavior is unpredictable, which poses significant challenges for development teams. Agenta provides a structured framework that bridges the gap between developers and subject matter experts and brings their workflows together.

By centralizing all components of the development process, Agenta replaces prompts and communications scattered across tools such as Slack, Google Sheets, and email. Integrated prompt management, automated evaluations, and observability let teams iterate quickly, simplify debugging, and improve the reliability and performance of their AI applications. The aim is to make LLM development a predictable and efficient practice.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). It helps developers and product teams validate and choose the most suitable model before they deploy new features. Users describe their benchmarking tasks in plain language and run them against 100+ models in a single session.

The platform compares cost per request, latency, quality scores, and the stability of outputs across multiple runs, so users can see variability instead of relying on a single, potentially misleading output. This matters most when cost efficiency (how quality relates to spend) drives the decision. Because OpenMark AI is a hosted solution that uses credits, teams do not have to manage multiple API keys. It suits teams that want to make data-driven decisions and select the model that best fits their workflow requirements.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the set of practices and tools used to manage the development and operation of large language models. It emphasizes collaboration, efficiency, and systematic workflows to ensure high-quality AI applications.

How does Agenta enhance collaboration?

Agenta enhances collaboration by centralizing all development activities in one platform, allowing product managers, developers, and domain experts to work together seamlessly. This eliminates silos and fosters a more integrated approach to LLM development.

Can Agenta integrate with other tools?

Yes. Agenta is designed to integrate with frameworks and model providers such as LangChain, LlamaIndex, and OpenAI, so teams can keep using their preferred tools and avoid vendor lock-in.

Is Agenta suitable for large teams?

Yes. Agenta is built for AI teams of all sizes, and its collaborative features, centralized workflows, and evaluation tools suit large teams that want to streamline their LLM development processes.

OpenMark AI FAQ

What is OpenMark AI?

OpenMark AI is a web application that enables users to benchmark large language models (LLMs) on specific tasks, providing insights into performance metrics like cost, latency, and quality.

Who can benefit from using OpenMark AI?

Developers, data scientists, and product teams working on AI features can greatly benefit from OpenMark AI as it helps them choose the right model based on empirical data and performance metrics.

Do I need to manage any API keys?

No, OpenMark AI is a hosted solution that eliminates the need for users to configure separate API keys for different models, streamlining the benchmarking process.

What types of tasks can I benchmark with OpenMark AI?

OpenMark AI allows users to benchmark a wide variety of tasks, including classification, translation, data extraction, and more, providing flexibility for diverse AI applications.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform for AI teams that focuses on centralized management and evaluation of prompts to make large language model applications more reliable. Users typically look for alternatives because of pricing, specific feature requirements, or compatibility with existing workflows. The search usually centers on a platform that fits the budget and offers the functionality the team needs, whether that is collaboration tools, evaluation capabilities, or ease of integration.

When choosing an alternative, consider the platform's user interface, its support for collaborative development, the depth of its analytics and observability tools, and its overall flexibility in managing LLM workflows. Also assess the community support and troubleshooting resources available, since they affect how productive your team can be.

OpenMark AI Alternatives

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). As a developer tool, it lets users evaluate over 100 models on cost, speed, quality, and stability without managing multiple API keys. Users typically look for alternatives because of pricing, specific feature sets, or the need for compatibility with their existing platforms.

When looking for an alternative, consider the range of models supported, ease of use, the accuracy of benchmarking results, and overall value for the cost. A good alternative should offer comprehensive comparisons and reliable performance metrics that help teams validate model performance before implementation. Also evaluate the flexibility of the platform, the quality of customer support, and the availability of free trials to confirm it meets your project's requirements.