Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right tool.

Agenta

Agenta centralizes prompt management and evaluation for seamless collaboration in building reliable AI applications.

Last updated: March 1, 2026

qtrl.ai

Scale QA with AI agents while keeping full control and governance.

Last updated: March 4, 2026

Visual Comparison

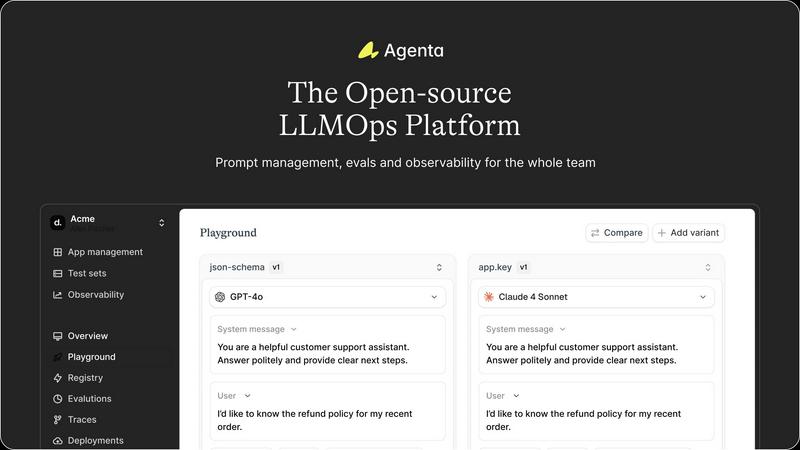

Agenta

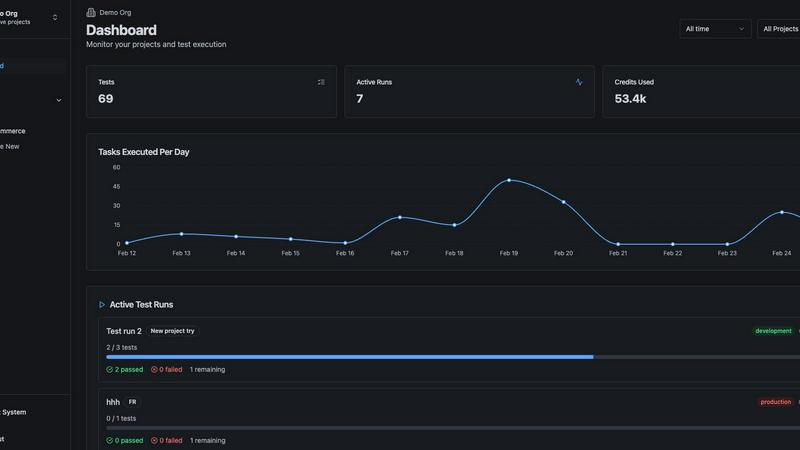

qtrl.ai

Feature Comparison

Agenta

Centralized Workflow Management

Agenta keeps all prompts, evaluations, and traces in one platform, giving your team a single source of truth. Centralizing them eliminates the confusion caused by scattered workflows and makes collaboration and communication among team members smoother.

Unified Playground

With Agenta's unified playground, teams can compare prompts and models side by side, enabling a more thorough evaluation of changes. The playground keeps a complete version history, so teams can track modifications and understand how their prompts evolved over time.

Automated Evaluation

Replace guesswork with data-driven insights through Agenta's automated evaluation capabilities. This feature allows teams to systematically run experiments, track results, and validate every change, fostering a culture of evidence-based decision-making within the development process.
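To make the idea of systematic experiments concrete, here is a minimal sketch of scoring two prompt variants against the same small test set. It does not use Agenta's SDK; the names `EvalCase`, `exact_match`, `evaluate`, `variant_a`, and `variant_b` are all hypothetical, and the variants are canned stand-ins for what would normally be calls to an LLM.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test-set entry: an input question and the answer we expect."""
    question: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 when the normalized output equals the expected answer."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def evaluate(variant: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Run every case through a variant and return its mean score."""
    scores = [exact_match(variant(c.question), c.expected) for c in cases]
    return sum(scores) / len(scores)


# Hypothetical stand-ins for two prompt versions; real variants would call an LLM.
def variant_a(question: str) -> str:
    answers = {"Capital of France?": "Paris", "2 + 2?": "4"}
    return answers.get(question, "unknown")


def variant_b(question: str) -> str:
    answers = {"Capital of France?": "paris ", "2 + 2?": "four"}
    return answers.get(question, "unknown")


CASES = [
    EvalCase("Capital of France?", "Paris"),
    EvalCase("2 + 2?", "4"),
    EvalCase("Largest planet?", "Jupiter"),
]

# Score both variants on the same cases so the comparison is like for like.
results = {
    name: evaluate(fn, CASES)
    for name, fn in [("variant_a", variant_a), ("variant_b", variant_b)]
}
best = max(results, key=results.get)
print(results, "best:", best)
```

Running every variant against the same fixed cases is what turns a prompt change from a hunch into a measurable difference. An exact-match scorer is the simplest possible choice here; a real evaluation would likely use a richer metric.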

Enhanced Observability

Agenta provides observability tools that let teams trace every request and pinpoint exact failure points. Teams can also annotate traces for collaboration and gather user feedback, leading to more informed debugging and improved system performance.

qtrl.ai

Autonomous QA Agents

Move beyond script maintenance nightmares. qtrl.ai deploys intelligent agents that run on demand or continuously, executing your instructions across multiple environments at scale. Crucially, they work within your defined rules and execute in real browsers, not simulations, so tests validate the experience real users get. This is AI you can trust to handle repetitive, complex workflows without going rogue.

Enterprise-Grade Test Management

Governance isn't an afterthought—it's the core. qtrl.ai provides a centralized command hub for all QA activities. Structure test cases, plans, and runs with full traceability from requirement to release. Built-in audit trails and compliance-ready workflows ensure every change is tracked, making it the secure, single source of truth for engineering leads and QA managers demanding visibility and control.

Progressive Automation

Ditch the all-or-nothing AI dilemma. qtrl.ai’s philosophy is gradual empowerment. Start by writing high-level test instructions yourself. Then, seamlessly transition to letting AI generate detailed tests from your requirements—all fully reviewable and approvable. The platform even analyzes coverage gaps and suggests new tests, putting you in the driver's seat for every step toward increased automation.

Adaptive Memory & Multi-Environment Execution

This is where qtrl.ai gets smarter with every interaction. Its Adaptive Memory builds a living knowledge base of your application, learning from exploration and test runs to power context-aware test generation. Coupled with robust multi-environment execution—supporting dev, staging, and prod with per-environment variables and encrypted secrets—it ensures tests are both intelligent and executed securely where it matters.
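As an illustration of per-environment variables, the sketch below resolves one high-level test instruction against different environments and masks secret values before anything is logged. This is not qtrl.ai's API or configuration format: the `Environment` class, its fields, and the example URLs are assumptions made for the example, and the masking shown is plain redaction, not encryption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    """Hypothetical per-environment settings for a test run."""
    name: str
    variables: dict[str, str]
    secret_keys: frozenset[str] = frozenset()

    def render(self, template: str) -> str:
        """Fill {placeholders} in an instruction with this environment's values."""
        return template.format(**self.variables)

    def redacted(self) -> dict[str, str]:
        """Return the variables with secret values masked, safe for logs."""
        return {
            key: ("***" if key in self.secret_keys else value)
            for key, value in self.variables.items()
        }


ENVIRONMENTS = {
    "staging": Environment(
        name="staging",
        variables={
            "base_url": "https://staging.example.com",
            "user": "qa-bot",
            "password": "staging-secret",
        },
        secret_keys=frozenset({"password"}),
    ),
    "prod": Environment(
        name="prod",
        variables={
            "base_url": "https://www.example.com",
            "user": "smoke-test",
            "password": "prod-secret",
        },
        secret_keys=frozenset({"password"}),
    ),
}

# The same instruction is written once and resolved separately per environment.
INSTRUCTION = "Open {base_url}/login and sign in as {user}"

for env in ENVIRONMENTS.values():
    print(env.name, "->", env.render(INSTRUCTION), env.redacted())
```

Keeping the instruction free of environment-specific values means the same test can run against dev, staging, and prod, while secrets stay out of the instruction text and out of log output.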

Use Cases

Agenta

Collaborative LLM Development

Agenta is ideal for AI teams looking to streamline their collaborative efforts in developing LLM applications. By providing a centralized platform, team members can work together more effectively, reducing silos and enhancing productivity.

Structured Experimentation

Teams can use Agenta to conduct structured experiments with their prompts. The platform's automated evaluation tools allow for quick iterations and data-driven decisions, making it easier to refine and enhance LLM applications continuously.

Efficient Debugging

When errors occur, Agenta's observability features enable teams to debug efficiently. By tracing requests and pinpointing failure points, teams can address issues rapidly without resorting to guesswork, thus improving overall application reliability.

Domain Expert Involvement

Agenta empowers domain experts to engage directly in the LLM development process. With a user-friendly interface that allows for safe editing and experimentation with prompts, experts can contribute valuable insights without needing extensive coding knowledge.

qtrl.ai

Scaling Beyond Manual Testing

For QA teams drowning in repetitive manual checklists, qtrl.ai is the lifeline. It allows teams to start by structuring their existing manual cases in the platform, then progressively automate the most tedious flows using AI agents. This creates immediate efficiency gains, frees up human testers for complex exploratory work, and provides a clear, manageable path to a hybrid automation strategy without a risky, overnight overhaul.

Modernizing Legacy QA Workflows

Companies stuck with fragmented tools—separate test case repos, siloed automation scripts, and manual reporting—can consolidate onto qtrl.ai. It replaces the patchwork with a unified platform that integrates test management, automation, and execution. This breaks down silos, introduces much-needed traceability and auditability, and injects modern AI capabilities into outdated processes, all while maintaining strict governance.

Governing Enterprise AI Testing

Enterprises that want to leverage AI but cannot afford "black-box" unpredictability find their solution here. qtrl.ai offers permissioned autonomy levels and full agent visibility, ensuring AI operates within strict compliance guardrails. Teams can leverage AI for test generation and execution at scale across global browsers, all while maintaining full audit trails, satisfying security reviews, and meeting rigorous regulatory requirements.

Empowering Product-Led Engineering Teams

Development teams embracing a product-led growth model need quality to keep pace with rapid, user-focused iteration. qtrl.ai integrates directly into CI/CD pipelines, providing continuous quality feedback. Engineers can write high-level test instructions or connect requirements, and qtrl.ai handles the rest, ensuring new features are validated quickly and reliably without creating automation debt, thus accelerating release cycles safely.

Overview

About Agenta

Agenta is an open-source LLMOps platform for AI teams that build and deploy large language model (LLM) applications. Because LLM output is unpredictable, these applications are hard to develop and debug reliably. Agenta provides a structured framework that bridges the gap between developers and subject matter experts, so both groups work in the same workflows. By centralizing all components of the development process, Agenta eliminates the chaos of prompts and communications scattered across disparate tools like Slack, Google Sheets, and email. With integrated prompt management, automated evaluations, and enhanced observability, teams can iterate quickly and effectively. This systematic approach simplifies debugging and improves the reliability and performance of AI applications, turning LLM development into a more predictable and efficient practice.

About qtrl.ai

The QA landscape is at a breaking point. Manual testing crumbles under agile velocity, while traditional automation is brittle and resource-heavy. qtrl.ai is built for teams that refuse to compromise between the two. It isn't just another test runner; it's a progressive AI-powered QA platform engineered to scale quality intelligently, without the "black-box" risks of fully autonomous AI. qtrl.ai bridges the gap between manual testing and full automation, with enterprise-grade test management as its foundation. Here, teams organize test cases, plan runs, trace requirements, and track real-time metrics with full governance. The real differentiator is its layered AI. You start with total control: simple manual management or human-written instructions. When ready, you progressively unlock autonomous agents that generate and maintain UI tests from plain English, executing them at scale across real browsers. Built for product-led engineering teams, QA groups scaling beyond manual testing, and compliance-focused enterprises, qtrl.ai delivers a trusted, transparent path from structured oversight to intelligent automation. It's the control tower for modern quality assurance.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the set of practices and tools used to manage the development and operation of applications built on large language models. It emphasizes collaboration, efficiency, and systematic workflows to ensure high-quality AI applications.

How does Agenta enhance collaboration?

Agenta enhances collaboration by centralizing all development activities in one platform, allowing product managers, developers, and domain experts to work together seamlessly. This eliminates silos and fosters a more integrated approach to LLM development.

Can Agenta integrate with other tools?

Yes, Agenta is designed to integrate with a range of frameworks and model providers, including LangChain, LlamaIndex, and OpenAI. This flexibility lets teams use their preferred tools without facing vendor lock-in.

Is Agenta suitable for large teams?

Absolutely. Agenta is tailored for AI teams of all sizes. Its collaborative features, centralized workflows, and robust evaluation tools make it an ideal solution for large teams looking to streamline their LLM development processes.

qtrl.ai FAQ

How does qtrl.ai's AI differ from other "autonomous" testing tools?

Many tools force a risky, AI-first approach where the AI makes opaque decisions. qtrl.ai is built on a principle of "permissioned autonomy." Its AI agents operate strictly within rules and instructions you define. You maintain full visibility into every action, and all AI-generated tests are reviewable and approvable. It's AI augmentation, not AI replacement, designed to earn trust through transparency and control.

Can we use qtrl.ai if we currently only do manual testing?

Absolutely, and that's the recommended starting point. qtrl.ai's platform is designed for progression. You can begin by using its robust test management features to organize and execute your manual test cases and plans. This gives you immediate value and a structured foundation. When your team is ready, you can gradually introduce AI-powered automation for specific flows, all within the same centralized platform.

Is our data secure, especially when using AI agents?

Security is non-negotiable. qtrl.ai is built with enterprise-grade safeguards. For AI operations, your application secrets and sensitive environment variables are encrypted and never exposed to the AI agents. The platform operates with full audit trails and is designed for compliance. You maintain sovereignty over your data and test assets at all times, with granular control over who can access and trigger automation.

How does qtrl.ai handle tests when our application UI changes?

This is a classic automation pain point. qtrl.ai's Adaptive Memory and intelligent agents are designed for resilience. The system learns from your application and past test executions. When changes occur, the AI can often suggest maintenance actions or adapt tests based on context. Furthermore, because you start with high-level instructions (e.g., "log in as a customer") rather than brittle, code-level selectors, tests are more durable and easier to update.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed for AI teams, focusing on the centralized management and evaluation of prompts to enhance the reliability of large language model applications. As organizations increasingly turn to LLMs for various use cases, users often seek alternatives to Agenta due to factors like pricing, specific feature requirements, or compatibility with existing workflows. The search for an alternative usually centers around finding a platform that not only meets budget constraints but also offers robust functionalities that align with the team's specific needs, whether that be collaboration tools, evaluation capabilities, or ease of integration. When choosing an alternative, it's crucial to consider factors such as the platform’s user interface, support for collaborative development, the depth of analytics and observability tools, and the overall flexibility in managing LLM workflows. Additionally, assessing the community support and resources available for troubleshooting can be vital in making an informed decision that enhances your team’s productivity and efficiency in AI application development.

qtrl.ai Alternatives

qtrl.ai is an AI-powered QA platform in the automation and dev tools space. It helps teams scale testing with intelligent agents while maintaining governance and control over the entire process. This hybrid approach is gaining traction as teams seek to modernize without the risks of full AI autonomy. Users often explore alternatives for several key reasons. Budget constraints, specific integration needs with existing CI/CD pipelines, or a desire for a different balance between AI automation and traditional scripting can drive the search. The need for specialized testing types, like performance or security, also plays a role. When evaluating other options, focus on the core pillars: governance, integration depth, and AI transparency. Look for platforms that offer robust audit trails, a good fit with your tech stack, and clear visibility into how AI agents generate and maintain tests. The goal is to accelerate release cycles without introducing new bottlenecks or compliance headaches.