CloudBurn vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

CloudBurn

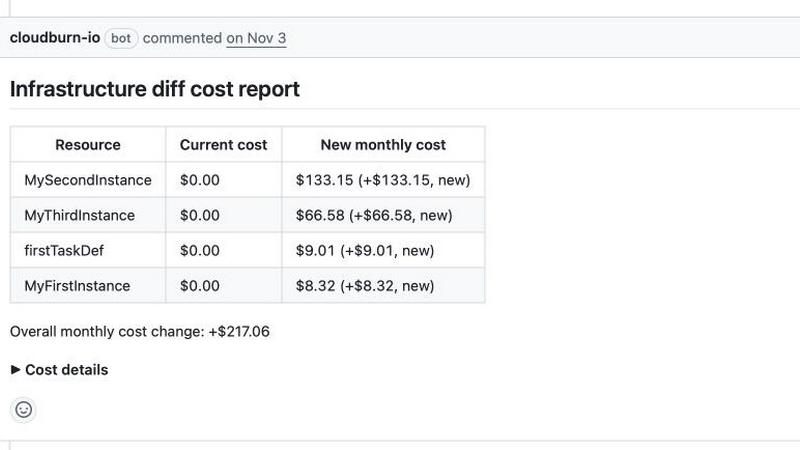

CloudBurn shows AWS cost estimates in pull requests to prevent costly mistakes before deployment.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Overview

About CloudBurn

CloudBurn is an early-warning system for cloud infrastructure costs, designed to stop budget-busting AWS bills before they happen. When infrastructure is defined as code with tools like Terraform and AWS CDK, a single misconfigured resource can silently add thousands of dollars to a monthly invoice. Traditional cost monitoring tools work like an autopsy report: they show the damage weeks after deployment, when changes are late and expensive.

CloudBurn reverses this model. It integrates into your GitHub CI/CD workflow and runs a cost analysis on every pull request before anything is deployed. Because it automatically posts a detailed cost impact report on each PR, developers and reviewers see the financial consequences of a code change while it is still under review. This builds cost accountability into the team, shifts FinOps left, and makes cost awareness a routine part of code review.

For engineering leaders, CloudBurn pays off by catching costly misconfigurations before they reach production, so infrastructure decisions are informed, optimized, and aligned with business budgets from the first commit.
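The idea behind a pre-deployment cost report can be sketched as a diff of estimated monthly costs between the current and the proposed infrastructure. This is a minimal illustration, not CloudBurn's implementation: the `Resource` type, the `monthly_cost` and `cost_impact_comment` helpers, and the hourly prices are all hypothetical placeholders. A real estimate would depend on actual AWS pricing data and on parsing the Terraform or CDK change itself.

```python
from dataclasses import dataclass

# Hypothetical on-demand prices in USD per hour (placeholders, not real AWS rates).
HOURLY_PRICE_USD = {
    "t3.micro": 0.01,
    "m5.large": 0.10,
}
HOURS_PER_MONTH = 730  # common approximation: 24 h * 365 d / 12 months


@dataclass(frozen=True)
class Resource:
    """Hypothetical, simplified view of one compute resource in an IaC plan."""
    name: str
    instance_type: str
    count: int = 1


def monthly_cost(resources: list[Resource]) -> float:
    """Estimate the monthly cost of a set of resources from hourly prices."""
    return sum(
        HOURLY_PRICE_USD[r.instance_type] * r.count * HOURS_PER_MONTH
        for r in resources
    )


def cost_impact_comment(before: list[Resource], after: list[Resource]) -> str:
    """Summarize the monthly cost change between two versions of the infrastructure."""
    old, new = monthly_cost(before), monthly_cost(after)
    delta = new - old
    sign = "+" if delta >= 0 else "-"
    return (
        f"Estimated monthly cost: ${old:,.2f} -> ${new:,.2f} "
        f"({sign}${abs(delta):,.2f})"
    )


if __name__ == "__main__":
    # A hypothetical PR that upgrades two web servers from t3.micro to m5.large.
    before = [Resource("web", "t3.micro", count=2)]
    after = [Resource("web", "m5.large", count=2)]
    print(cost_impact_comment(before, after))
```

Running it prints `Estimated monthly cost: $14.60 -> $146.00 (+$131.40)`, the kind of one-line delta a reviewer could weigh before approving an instance-type change.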

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
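Measuring stability across repeat runs comes down to looking at the spread of scores, not only the average. The sketch below assumes each run yields a numeric quality score; the 0-10 scale, the model names, the scores, and the `stability_summary` helper are hypothetical, and this is not OpenMark AI's documented scoring method.

```python
from statistics import mean, stdev


def stability_summary(scores: list[float]) -> dict[str, float]:
    """Summarize quality scores from repeat runs of one model on one task."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to measure variance")
    return {
        "mean": mean(scores),
        "stdev": stdev(scores),  # sample standard deviation across runs
        "min": min(scores),
        "max": max(scores),
    }


# Hypothetical scores (0-10 scale) from five repeat runs of the same prompt.
runs = {
    "model-a": [8.0, 8.0, 8.0, 8.0, 8.0],
    "model-b": [10.0, 6.0, 10.0, 6.0, 8.0],
}

for model, scores in runs.items():
    s = stability_summary(scores)
    print(f"{model}: mean={s['mean']:.1f} stdev={s['stdev']:.2f} "
          f"range={s['min']:.1f}-{s['max']:.1f}")
```

Both hypothetical models average 8.0, so a single run could make either look identical; the standard deviation (0.00 versus 2.00) is what separates the consistent model from the one that swings between strong and weak outputs.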

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

Results are shown side by side and come from real API calls to each model, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
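Cost efficiency, meaning quality relative to what you pay, can be expressed in many ways. One simple option is mean quality divided by cost per request, ranked only among models that clear a minimum quality bar. The metric, the threshold, the `ModelResult` type, the model names, and all numbers below are hypothetical assumptions for illustration; they are not OpenMark AI's formula or real benchmark results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelResult:
    model: str
    mean_quality: float      # average score over repeat runs (hypothetical 0-10 scale)
    cost_per_request: float  # USD per request (hypothetical)


def quality_per_dollar(r: ModelResult) -> float:
    """Quality points delivered per dollar spent on one request."""
    return r.mean_quality / r.cost_per_request


def rank_by_efficiency(results: list[ModelResult], min_quality: float) -> list[ModelResult]:
    """Drop models below the quality bar, then sort by quality per dollar (best first)."""
    acceptable = [r for r in results if r.mean_quality >= min_quality]
    return sorted(acceptable, key=quality_per_dollar, reverse=True)


results = [
    ModelResult("model-cheap", mean_quality=5.0, cost_per_request=0.001),
    ModelResult("model-mid", mean_quality=8.0, cost_per_request=0.003),
    ModelResult("model-premium", mean_quality=9.0, cost_per_request=0.010),
]

for r in rank_by_efficiency(results, min_quality=7.0):
    print(f"{r.model}: {quality_per_dollar(r):,.0f} quality points per USD")
```

On raw ratio alone, `model-cheap` would rank first (about 5,000 points per USD), but it falls below the quality bar; among acceptable models, `model-mid` comes out ahead of the more expensive `model-premium`. The threshold step is a design choice that keeps a cheap but unusable model from winning on price.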

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.