Fallom vs qtrl.ai

Side-by-side comparison to help you choose the right tool.

Fallom provides real-time observability and cost tracking for your AI agents.

Last updated: February 28, 2026

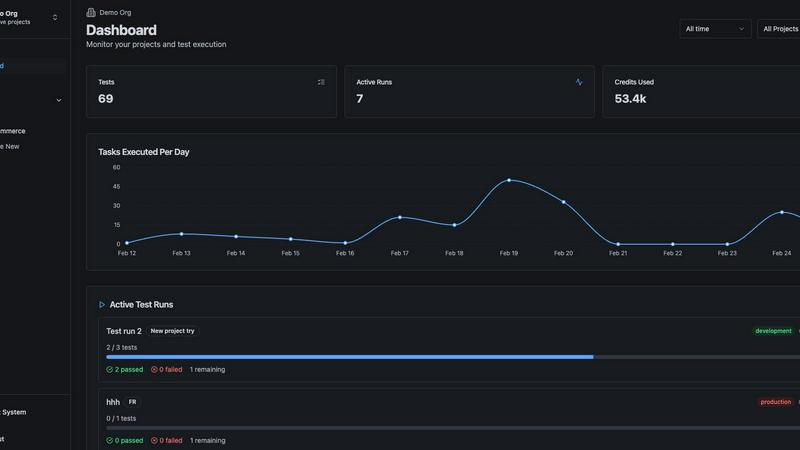

qtrl.ai

Scale QA with AI agents while keeping full control and governance.

Last updated: March 4, 2026

Feature Comparison

Fallom

End-to-End LLM Tracing & Live Dashboard

Gain real-time, granular visibility into every AI interaction. Fallom's live dashboard shows a streaming feed of all LLM calls, capturing the full context: the exact input prompt, the model used, token counts, latency, and calculated cost. You can click into any trace to see the complete chain, including intermediate steps and tool calls. This instant, detailed observability is critical for spotting anomalies, understanding user behavior, and ensuring your AI features are performing as expected in the wild.

Enterprise Compliance & Audit Trails

Navigate the complex landscape of AI regulation with confidence. Fallom is built for compliance, offering immutable, complete audit trails of every LLM interaction. This includes full input/output logging, model versioning, and user consent tracking—essential for adhering to the EU AI Act, GDPR, and SOC 2 requirements. A dedicated Privacy Mode allows you to disable content capture for sensitive data, maintaining full telemetry while protecting user privacy and confidential information.

Advanced Cost Attribution & Analytics

Take control of your spiraling AI spend. Fallom automatically attributes costs across every dimension: per model, per user, per team, and per customer. The platform provides clear dashboards and breakdowns, showing exactly where your budget is going. This enables precise budgeting, internal chargeback, and data-driven decisions on model selection, helping you optimize for both cost and performance without any financial blind spots.
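To make the idea of per-dimension attribution concrete, here is a minimal sketch of how call-level costs could be rolled up by model, user, or team. It is not Fallom's API: the record fields, the model names, and the price table are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical per-1K-token prices in USD; real prices vary by provider and date.
PRICE_PER_1K = {
    "model-a": {"input": 0.0100, "output": 0.0300},
    "model-b": {"input": 0.0005, "output": 0.0015},
}


def call_cost(call):
    """Cost of one LLM call, computed from its token counts and the price table."""
    price = PRICE_PER_1K[call["model"]]
    input_cost = (call["input_tokens"] / 1000) * price["input"]
    output_cost = (call["output_tokens"] / 1000) * price["output"]
    return input_cost + output_cost


def attribute_costs(calls, dimension):
    """Sum call costs grouped by one dimension, e.g. 'model', 'user', or 'team'."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[dimension]] += call_cost(call)
    return dict(totals)


# Hypothetical call records, as a tracing layer might emit them.
calls = [
    {"model": "model-a", "user": "u1", "team": "search",
     "input_tokens": 1000, "output_tokens": 500},
    {"model": "model-b", "user": "u2", "team": "search",
     "input_tokens": 2000, "output_tokens": 1000},
    {"model": "model-a", "user": "u1", "team": "support",
     "input_tokens": 500, "output_tokens": 100},
]

by_team = attribute_costs(calls, "team")
by_model = attribute_costs(calls, "model")
print(by_team)
print(by_model)
```

The same list of records answers every "per X" question by changing only the `dimension` argument, which is the property that makes chargeback reports cheap to produce once each call carries its context.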

Timing Waterfalls & Tool Call Visibility

Debug the performance of multi-step AI agents with surgical precision. Fallom's timing waterfall visualizations break down the latency of each step in an agent's workflow, instantly pinpointing whether delays are in LLM calls, tool executions (like database queries or API calls), or your own code. Combined with full visibility into every tool call's arguments and results, you can quickly identify and resolve bottlenecks that impact user experience.
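As a rough illustration of what a timing waterfall computes, the sketch below turns a list of timed steps into offsets and durations, then picks out the slowest step. The span fields (`name`, `kind`, `start_ms`, `end_ms`) and the sample values are assumptions made for this example, not Fallom's data model.

```python
def build_waterfall(spans):
    """Return rows ordered by start time, each with its offset from the
    start of the trace and its duration, both in milliseconds."""
    trace_start = min(s["start_ms"] for s in spans)
    rows = []
    for s in sorted(spans, key=lambda s: s["start_ms"]):
        rows.append({
            "name": s["name"],
            "kind": s["kind"],
            "offset_ms": s["start_ms"] - trace_start,
            "duration_ms": s["end_ms"] - s["start_ms"],
        })
    return rows


def slowest_step(rows):
    """The row with the largest duration, i.e. the first place to look."""
    return max(rows, key=lambda r: r["duration_ms"])


def render(rows, ms_per_char=100):
    """Draw a plain-text waterfall: indentation is offset, bar length is duration."""
    lines = []
    for r in rows:
        pad = " " * (r["offset_ms"] // ms_per_char)
        bar = "#" * max(1, r["duration_ms"] // ms_per_char)
        lines.append(f"{r['name']:<12} {pad}{bar} {r['duration_ms']} ms")
    return "\n".join(lines)


# Hypothetical agent run: two LLM calls around one tool call.
spans = [
    {"name": "plan", "kind": "llm", "start_ms": 1000, "end_ms": 1400},
    {"name": "db_lookup", "kind": "tool", "start_ms": 1400, "end_ms": 2600},
    {"name": "answer", "kind": "llm", "start_ms": 2600, "end_ms": 3100},
]

rows = build_waterfall(spans)
print(render(rows))
print("slowest:", slowest_step(rows)["name"])
```

In this made-up trace the tool call, not either LLM call, dominates the latency, which is the kind of conclusion a waterfall view is meant to surface at a glance.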

qtrl.ai

Autonomous QA Agents

Move beyond script maintenance nightmares. qtrl.ai deploys intelligent agents that operate on demand or continuously, executing precise instructions across multiple environments at scale. Crucially, they work within your defined rules and execute in real browsers, not simulations, so tests validate the experience real users get. This is AI you can trust to handle repetitive, complex workflows without going rogue.

Enterprise-Grade Test Management

Governance isn't an afterthought—it's the core. qtrl.ai provides a centralized command hub for all QA activities. Structure test cases, plans, and runs with full traceability from requirement to release. Built-in audit trails and compliance-ready workflows ensure every change is tracked, making it the secure, single source of truth for engineering leads and QA managers demanding visibility and control.

Progressive Automation

Ditch the all-or-nothing AI dilemma. qtrl.ai’s philosophy is gradual empowerment. Start by writing high-level test instructions yourself. Then, seamlessly transition to letting AI generate detailed tests from your requirements—all fully reviewable and approvable. The platform even analyzes coverage gaps and suggests new tests, putting you in the driver's seat for every step toward increased automation.

Adaptive Memory & Multi-Environment Execution

This is where qtrl.ai gets smarter with every interaction. Its Adaptive Memory builds a living knowledge base of your application, learning from exploration and test runs to power context-aware test generation. Coupled with robust multi-environment execution—supporting dev, staging, and prod with per-environment variables and encrypted secrets—it ensures tests are both intelligent and executed securely where it matters.

Use Cases

Fallom

Monitoring and Debugging Production AI Agents

When your customer-facing AI agent starts behaving oddly or timing out, you need answers fast. Fallom provides the complete picture, allowing you to trace a user's failed session end-to-end. See the exact prompts that led to an error, inspect the arguments passed to a faulty tool call, and analyze latency waterfalls to find the slow step. This turns hours of guesswork into minutes of targeted debugging, ensuring high reliability for your AI-powered features.

Ensuring Regulatory Compliance for AI Products

For companies in finance, healthcare, or any regulated industry, deploying AI comes with heavy compliance burdens. Fallom acts as your audit engine, automatically generating the required logs for every LLM interaction. You can prove which model version generated a specific output, demonstrate that user consent was captured, and maintain privacy-mode logs for sensitive operations, making audits for the EU AI Act or GDPR a streamlined process.

Controlling and Optimizing LLM Spend

With AI costs becoming a major line item, finance and engineering teams need transparency. Fallom answers critical questions: Is Team A's experimental feature burning budget on GPT-4? Which customer is the most expensive to serve? By providing detailed, attribute-level cost reporting, Fallom enables showback/chargeback models, helps teams choose cost-effective models for specific tasks, and identifies wasteful patterns before they impact the bottom line.

Performance Optimization and Model A/B Testing

Before rolling out a new, faster model like Claude 3.5 Sonnet, you need confidence it won't break things. Fallom's built-in A/B testing framework lets you safely split traffic between models, comparing their performance, cost, and output quality (via integrated evaluations) in real-time. Combined with timing waterfalls, you can validate latency improvements and switch traffic with confidence, ensuring continuous performance enhancement.
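One common way to split traffic between two models is deterministic, hash-based assignment, sketched below. This is a generic technique and an assumption about how such a split could work, not a description of Fallom's implementation; the function name, variant labels, and weights are hypothetical.

```python
import hashlib


def assign_variant(user_id, variants, weights):
    """Map a user to a variant deterministically.

    The user ID is hashed to a number in [0, 1), which is compared against
    the cumulative weights. The same user always gets the same variant.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    cumulative = 0.0
    for variant, weight in zip(variants, weights):
        cumulative += weight
        if bucket < cumulative:
            return variant
    return variants[-1]  # guards against floating-point rounding in the weights


# Hypothetical rollout: 90% of users stay on the current model, 10% try the candidate.
variants = ["current-model", "candidate-model"]
weights = [0.9, 0.1]

assignments = [assign_variant(f"user-{i}", variants, weights) for i in range(10000)]
candidate_share = assignments.count("candidate-model") / len(assignments)
print(f"candidate share: {candidate_share:.3f}")
```

Because assignment depends only on the user ID, a user sees consistent behavior across sessions, and latency, cost, and quality metrics can be compared per variant without storing an assignment table.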

qtrl.ai

Scaling Beyond Manual Testing

For QA teams drowning in repetitive manual checklists, qtrl.ai is the lifeline. It allows teams to start by structuring their existing manual cases in the platform, then progressively automate the most tedious flows using AI agents. This creates immediate efficiency gains, frees up human testers for complex exploratory work, and provides a clear, manageable path to a hybrid automation strategy without a risky, overnight overhaul.

Modernizing Legacy QA Workflows

Companies stuck with fragmented tools—separate test case repos, siloed automation scripts, and manual reporting—can consolidate onto qtrl.ai. It replaces the patchwork with a unified platform that integrates test management, automation, and execution. This breaks down silos, introduces much-needed traceability and auditability, and injects modern AI capabilities into outdated processes, all while maintaining strict governance.

Governing Enterprise AI Testing

Enterprises that want to leverage AI but cannot afford "black-box" unpredictability find their solution here. qtrl.ai offers permissioned autonomy levels and full agent visibility, ensuring AI operates within strict compliance guardrails. Teams can leverage AI for test generation and execution at scale across global browsers, all while maintaining full audit trails, satisfying security reviews, and meeting rigorous regulatory requirements.

Empowering Product-Led Engineering Teams

Development teams embracing a product-led growth model need quality to keep pace with rapid, user-focused iteration. qtrl.ai integrates directly into CI/CD pipelines, providing continuous quality feedback. Engineers can write high-level test instructions or connect requirements, and qtrl.ai handles the rest, ensuring new features are validated quickly and reliably without creating automation debt, thus accelerating release cycles safely.

Overview

About Fallom

Fallom is the AI-native observability platform engineered for the new frontier of software: Large Language Model (LLM) and autonomous agent workloads. As enterprises rush to integrate generative AI into their core products, they're hitting a critical visibility wall. Traditional APM tools fall short, leaving teams flying blind on cost, performance, and compliance. Fallom shatters that barrier. It provides real-time, granular visibility into every single LLM call in production, delivering end-to-end tracing that captures prompts, outputs, tool calls, tokens, latency, and per-call costs. Built with enterprise-scale and regulatory rigor in mind, it adds crucial session, user, and customer-level context, transforming fragmented API calls into a coherent narrative of AI interactions. With its OpenTelemetry-native SDK, teams can instrument their entire AI stack in minutes, not months. Fallom is the definitive tool for engineering and product teams who need to monitor usage live, debug complex agentic workflows, attribute costs accurately, and maintain robust audit trails for frameworks like GDPR and the EU AI Act. It's not just monitoring; it's the command center for reliable, compliant, and cost-effective AI operations.

About qtrl.ai

The QA landscape is at a breaking point. Manual testing crumbles under agile velocity, while traditional automation is a brittle, resource-heavy beast. Enter qtrl.ai, the definitive answer for teams refusing to compromise. This isn't just another test runner; it's a progressive AI-powered QA platform engineered to scale quality intelligently, without the terrifying "black-box" risks of fully autonomous AI. qtrl.ai masterfully bridges the critical gap, offering enterprise-grade test management as its rock-solid foundation. Here, teams organize test cases, plan runs, trace requirements, and track real-time metrics with full governance. But the real game-changer is its layered AI. You start with total control—simple manual management or human-written instructions. When ready, you progressively unlock autonomous agents that generate and maintain UI tests from plain English, executing them at scale across real browsers. Built for product-led engineering teams, QA groups scaling beyond manual, and compliance-focused enterprises, qtrl.ai delivers a trusted, transparent path from structured oversight to intelligent automation. It’s the control tower for modern quality assurance.

Frequently Asked Questions

Fallom FAQ

How does Fallom integrate with my existing AI stack?

Fallom is built on OpenTelemetry, the open standard for observability. Integration is simple: add Fallom's single, lightweight SDK to your application. It automatically instruments calls to all major LLM providers (OpenAI, Anthropic, Google, etc.) and custom agents without vendor lock-in. You can be tracing live calls in under 5 minutes, with no changes to your core application logic.

How does Fallom handle sensitive or private user data?

Security and privacy are paramount. Fallom offers a configurable Privacy Mode. When enabled, you can choose to redact specific data fields, log only metadata (like token counts and latency), or disable content capture entirely for sensitive environments. This ensures you maintain full observability for performance and cost while complying with strict data protection policies.
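The three options described above (redact fields, log metadata only, or capture everything) can be pictured as a filter applied to each trace record before it is stored. The sketch below is illustrative only: the mode names, field names, and `apply_privacy` function are hypothetical and do not reflect Fallom's actual configuration.

```python
import copy

# Hypothetical names of the fields that hold captured content.
CONTENT_FIELDS = ("prompt", "completion")


def apply_privacy(record, mode):
    """Return a filtered copy of a trace record.

    'full'          keeps everything.
    'redact'        keeps the content fields but masks their values.
    'metadata_only' drops the content fields and keeps the telemetry.
    """
    out = copy.deepcopy(record)  # never mutate the caller's record
    if mode == "full":
        return out
    if mode == "redact":
        for field in CONTENT_FIELDS:
            if field in out:
                out[field] = "[REDACTED]"
        return out
    if mode == "metadata_only":
        for field in CONTENT_FIELDS:
            out.pop(field, None)
        return out
    raise ValueError(f"unknown privacy mode: {mode}")


record = {
    "model": "model-a",
    "input_tokens": 12,
    "output_tokens": 40,
    "latency_ms": 340,
    "prompt": "hello",
    "completion": "hi there",
}

print(apply_privacy(record, "redact"))
print(apply_privacy(record, "metadata_only"))
```

In both restricted modes the token counts and latency survive, so cost and performance reporting keep working even when no prompt or completion text is stored.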

Can I use Fallom to test and evaluate my LLM prompts?

Absolutely. Fallom includes a Prompt Store for versioning and managing your prompts. You can A/B test different prompt variations directly within the platform, deploying winning versions instantly. Furthermore, you can run automated evaluations (for accuracy, relevance, hallucination rates, etc.) on LLM outputs to catch regressions before they reach production users.

Is Fallom suitable for small startups or only large enterprises?

Fallom is built to scale from fast-moving startups to global enterprises. It offers a free tier to get started, which is perfect for small teams to gain immediate visibility. As your AI usage grows, its enterprise features—like advanced cost attribution, session tracking, and compliance tooling—become essential for managing complexity, cost, and risk at scale.

qtrl.ai FAQ

How does qtrl.ai's AI differ from other "autonomous" testing tools?

Many tools force a risky, AI-first approach where the AI makes opaque decisions. qtrl.ai is built on a principle of "permissioned autonomy." Its AI agents operate strictly within rules and instructions you define. You maintain full visibility into every action, and all AI-generated tests are reviewable and approvable. It's AI augmentation, not AI replacement, designed to earn trust through transparency and control.

Can we use qtrl.ai if we currently only do manual testing?

Absolutely, and that's the recommended starting point. qtrl.ai's platform is designed for progression. You can begin by using its robust test management features to organize and execute your manual test cases and plans. This gives you immediate value and a structured foundation. When your team is ready, you can gradually introduce AI-powered automation for specific flows, all within the same centralized platform.

Is our data secure, especially when using AI agents?

Security is non-negotiable. qtrl.ai is built with enterprise-grade safeguards. For AI operations, your application secrets and sensitive environment variables are encrypted and never exposed to the AI agents. The platform operates with full audit trails and is designed for compliance. You maintain sovereignty over your data and test assets at all times, with granular control over who can access and trigger automation.

How does qtrl.ai handle tests when our application UI changes?

This is a classic automation pain point. qtrl.ai's Adaptive Memory and intelligent agents are designed for resilience. The system learns from your application and past test executions. When changes occur, the AI can often suggest maintenance actions or adapt tests based on context. Furthermore, because you start with high-level instructions (e.g., "log in as a customer") rather than brittle, code-level selectors, tests are more durable and easier to update.

Alternatives

Fallom Alternatives

Fallom is an AI-native observability platform, in the category of tools designed for monitoring, debugging, and managing LLM and AI agent workloads in production. As this space grows, teams look for options that better fit their stack or budget, or that offer a different blend of features, such as deeper integration with existing APM tools or more granular data retention policies.

When evaluating alternatives, the key is to match the tool to your operational reality. Look for robust real-time tracing that covers the full agent chain: prompts, tool calls, and costs. Enterprise teams must prioritize compliance readiness with audit trails and session context, while startups might seek more flexible pricing. The ideal platform should integrate seamlessly without becoming a development bottleneck.

Ultimately, the right observability solution should turn opaque AI operations into a clear, actionable dashboard. It's not just about logging calls; it's about gaining the insights to improve performance, control spend, and ensure reliable, compliant AI deployments that scale with your ambitions.

qtrl.ai Alternatives

qtrl.ai is a trending AI-powered QA platform in the automation and dev tools space. It helps teams scale testing with intelligent agents while maintaining crucial governance and control over the entire process. This hybrid approach is gaining traction as teams seek to modernize without the risks of full AI autonomy.

Users often explore alternatives for several key reasons. Budget constraints, specific integration needs with existing CI/CD pipelines, or a desire for a different balance between AI automation and traditional scripting can drive the search. The need for specialized testing types, like performance or security, also plays a role.

When evaluating other options, focus on the core pillars: governance, integration depth, and AI transparency. Look for platforms that offer robust audit trails, seamless fit with your tech stack, and clear visibility into how AI agents generate and maintain tests. The goal is to accelerate release cycles without introducing new bottlenecks or compliance headaches.