OGimagen vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

OGimagen instantly creates AI-generated Open Graph images and meta tags for every major social platform.

Last updated: March 11, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Visual Comparison

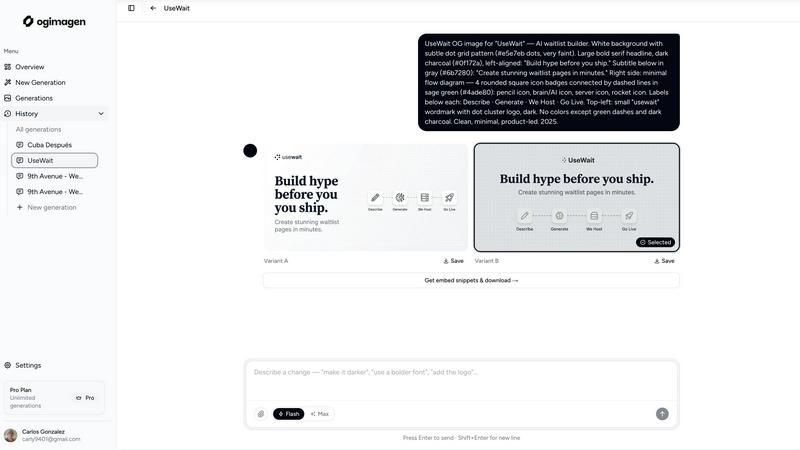

OGimagen

OpenMark AI

Overview

About OGimagen

In the crowded landscape of social media and link sharing, a link's preview image is often its first impression. OGimagen is an AI-powered tool that automates creating it. It is built for developers, content creators, and marketing teams who need to generate platform-optimized Open Graph, Twitter, and LinkedIn card images in seconds, directly from text, without wrestling with design tools or settling for bland templates.

OGimagen uses AI to interpret your title and description and creates two unique visual variants to choose from. Beyond image generation, it delivers the complete package: production-ready images hosted on a global Cloudflare CDN and the meta tag snippets for your framework (Next.js, Astro, SvelteKit, etc.), ready to copy and paste into your code.

Its MCP (Model Context Protocol) integration lets you generate and manage images without leaving an AI-powered editor such as Cursor or Claude Code. OGimagen turns a tedious, multi-step design and development task into a three-click workflow that requires no design skills, so every shared link looks professional and is better positioned to earn clicks.
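For readers unfamiliar with what a meta tag snippet looks like, here is a minimal sketch of how Open Graph and Twitter card tags could be assembled from a title, description, and hosted image URL. It is not OGimagen's actual output or API: the `LinkPreview` shape, the `buildMetaTags` helper, and the example URLs are all hypothetical. Only the tag names (`og:title`, `og:image`, `twitter:card`, and so on) come from the Open Graph and Twitter card conventions.

```typescript
// Hypothetical input shape for illustration; not OGimagen's API.
interface LinkPreview {
  title: string;
  description: string;
  imageUrl: string; // assumed to be a CDN-hosted preview image
  pageUrl: string;
}

// Escape characters that would break an HTML attribute value.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build Open Graph tags (also read by LinkedIn) and Twitter card tags.
function buildMetaTags(p: LinkPreview): string {
  const openGraph: Array<[string, string]> = [
    ["og:type", "website"],
    ["og:title", p.title],
    ["og:description", p.description],
    ["og:image", p.imageUrl],
    ["og:url", p.pageUrl],
  ];
  const twitter: Array<[string, string]> = [
    ["twitter:card", "summary_large_image"],
    ["twitter:title", p.title],
    ["twitter:description", p.description],
    ["twitter:image", p.imageUrl],
  ];
  const lines = [
    // Open Graph uses the `property` attribute, Twitter cards use `name`.
    ...openGraph.map(([k, v]) => `<meta property="${k}" content="${escapeAttr(v)}" />`),
    ...twitter.map(([k, v]) => `<meta name="${k}" content="${escapeAttr(v)}" />`),
  ];
  return lines.join("\n");
}

// Example usage with placeholder values.
const exampleTags = buildMetaTags({
  title: 'Launch notes: "v2" & beyond',
  description: "What changed in this release.",
  imageUrl: "https://cdn.example.com/og/launch.png",
  pageUrl: "https://example.com/blog/launch",
});
console.log(exampleTags);
```

The resulting string can be pasted into a page's `<head>`. Framework-specific helpers (for example a metadata object in Next.js) would express the same fields differently.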

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
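To make "stability" and "cost efficiency" concrete, the sketch below summarizes repeated runs of one model into a mean score, a score spread, and a quality-per-dollar figure. This is an illustration under stated assumptions, not OpenMark AI's scoring method, which the product description does not specify. The `RunResult` and `ModelSummary` shapes, the use of population standard deviation as the stability measure, and the `scorePerDollar` ratio are all hypothetical choices.

```typescript
// Hypothetical record of one benchmark run; field names are assumptions.
interface RunResult {
  score: number;     // scored quality, assumed to be on a 0..1 scale
  costUsd: number;   // cost of the request in US dollars
  latencyMs: number; // end-to-end latency in milliseconds
}

interface ModelSummary {
  meanScore: number;
  scoreStdDev: number;    // spread across repeat runs; lower means more stable
  meanCostUsd: number;
  meanLatencyMs: number;
  scorePerDollar: number; // assumed cost-efficiency measure: quality per dollar
}

function mean(xs: number[]): number {
  return xs.reduce((a, b) => a + b, 0) / xs.length;
}

function summarize(runs: RunResult[]): ModelSummary {
  if (runs.length === 0) {
    throw new Error("summarize needs at least one run");
  }
  const scores = runs.map((r) => r.score);
  const meanScore = mean(scores);
  // Population variance of the scores across repeat runs.
  const variance = mean(scores.map((s) => (s - meanScore) ** 2));
  const meanCostUsd = mean(runs.map((r) => r.costUsd));
  return {
    meanScore,
    scoreStdDev: Math.sqrt(variance),
    meanCostUsd,
    meanLatencyMs: mean(runs.map((r) => r.latencyMs)),
    // Guard against division by zero for free runs.
    scorePerDollar: meanCostUsd > 0 ? meanScore / meanCostUsd : Infinity,
  };
}

// Example usage with made-up numbers for two repeat runs of one model.
const summary = summarize([
  { score: 0.8, costUsd: 0.01, latencyMs: 900 },
  { score: 0.6, costUsd: 0.03, latencyMs: 1100 },
]);
console.log(summary);
```

Under this framing, a model with a slightly lower mean score can still be the better pick if its spread is small and its score per dollar is high, which is the kind of trade-off a single run or a token price sheet does not reveal.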

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.