Lovalingo vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

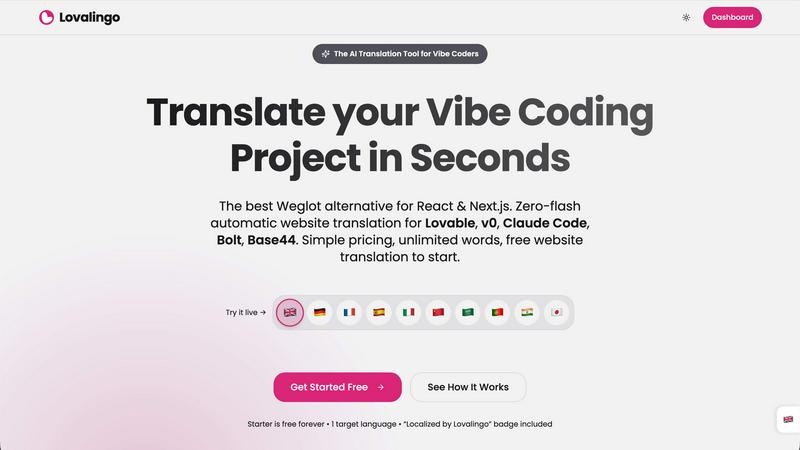

Lovalingo

Instantly translate and index your React app with zero flash and automated SEO.

Last updated: February 28, 2026

OpenMark AI

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Overview

About Lovalingo

Lovalingo is an AI-native translation layer for React and Next.js applications. It replaces the traditional i18n workflow of hand-maintained JSON files, broken layouts, and SEO workarounds. Built for "vibe coders" who work with AI-powered tools such as Lovable, v0, Claude Code, Bolt, and Base44, it automates the translation process. Translation is render-native and zero-flash: it runs inside the application's render flow rather than as a post-load DOM hack. You add languages with a single prompt, and SEO support such as automatic hreflang tags and sitemaps is included from day one. Lovalingo is aimed at SaaS founders targeting global markets, agencies building on modern AI platforms, and developers who want to ship multilingual features without a manual maintenance bottleneck.
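For readers unfamiliar with hreflang tags, here is a minimal sketch of what such tags look like for one page. The `hreflangLinks` helper and the `/<locale>/<path>` URL scheme are assumptions made for illustration only; this is not Lovalingo's API or its actual output.

```typescript
// Hypothetical helper: builds hreflang <link> tags for a single page.
// Assumes each locale lives under "/<locale>/<path>", which is an
// illustrative URL scheme, not something the tool is known to use.
function hreflangLinks(
  origin: string,
  path: string,
  locales: string[],
  defaultLocale: string,
): string[] {
  // Normalize the path so it always starts with a single slash.
  const cleanPath = path.startsWith("/") ? path : `/${path}`;

  // One alternate link per supported locale.
  const links = locales.map(
    (locale) =>
      `<link rel="alternate" hreflang="${locale}" href="${origin}/${locale}${cleanPath}" />`,
  );

  // x-default marks the fallback URL when no listed locale matches.
  links.push(
    `<link rel="alternate" hreflang="x-default" href="${origin}/${defaultLocale}${cleanPath}" />`,
  );
  return links;
}
```

Calling `hreflangLinks("https://example.com", "pricing", ["en", "fr"], "en")` returns three tags: one each for `en` and `fr`, plus an `x-default` entry pointing at the English URL.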

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
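To make "stability across repeat runs" concrete, the sketch below summarizes the quality scores from repeated runs of one task as a mean and a standard deviation. The `summarizeScores` function and the use of population standard deviation are assumptions for illustration; the source does not say how OpenMark AI computes stability.

```typescript
// Hypothetical summary of repeated-run scores for one model on one task.
interface RunStats {
  mean: number;
  stdDev: number; // population standard deviation; lower means more stable
}

function summarizeScores(scores: number[]): RunStats {
  if (scores.length === 0) {
    throw new Error("need at least one score");
  }
  const mean = scores.reduce((sum, x) => sum + x, 0) / scores.length;
  // Average squared distance from the mean across all runs.
  const variance =
    scores.reduce((sum, x) => sum + (x - mean) ** 2, 0) / scores.length;
  return { mean, stdDev: Math.sqrt(variance) };
}
```

Two models with the same mean score can differ sharply in `stdDev`; the one with the smaller spread is less likely to have earned its score from a single lucky output.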

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
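One simple way to read "quality relative to what you pay" is as a ratio of quality score to cost per request. The sketch below ranks models by that ratio. The `ModelResult` shape, the `rankByCostEfficiency` function, and the ratio itself are assumptions for illustration, not OpenMark AI's scoring method.

```typescript
// Hypothetical result record for one model on one task.
interface ModelResult {
  model: string;
  quality: number; // scored quality, higher is better
  costUsd: number; // cost per request in USD, must be positive
}

// Returns a new array sorted by quality per dollar, best first.
function rankByCostEfficiency(results: ModelResult[]): ModelResult[] {
  for (const r of results) {
    if (r.costUsd <= 0) {
      throw new Error(`cost must be positive for ${r.model}`);
    }
  }
  // Copy before sorting so the caller's array is left untouched.
  return [...results].sort(
    (a, b) => b.quality / b.costUsd - a.quality / a.costUsd,
  );
}
```

Under this ratio a cheaper model with a moderate score can outrank a more expensive model with a higher score, so in practice you would likely also set a minimum acceptable quality before ranking.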

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.